A police chief has admitted that artificial intelligence used to boost crime fighting will contain bias, but has pledged to combat the risks.

Labour wants a dramatic expansion of police use of AI in England and Wales, and police chiefs also believe it could help keep law enforcement up to date with new criminal threats.

Alex Murray told the Guardian that a new national police AI centre would recognise the risks of bias and minimise them.Bias in use of AI in policing could result in instances where algorithms – often trained on historical data reflecting past human prejudices – systematically produce unfair outcomes, such as overtargeting minority communities or misidentifying individuals based on race, gender, or socioeconomic status.Murray, the director of threat leadership with the

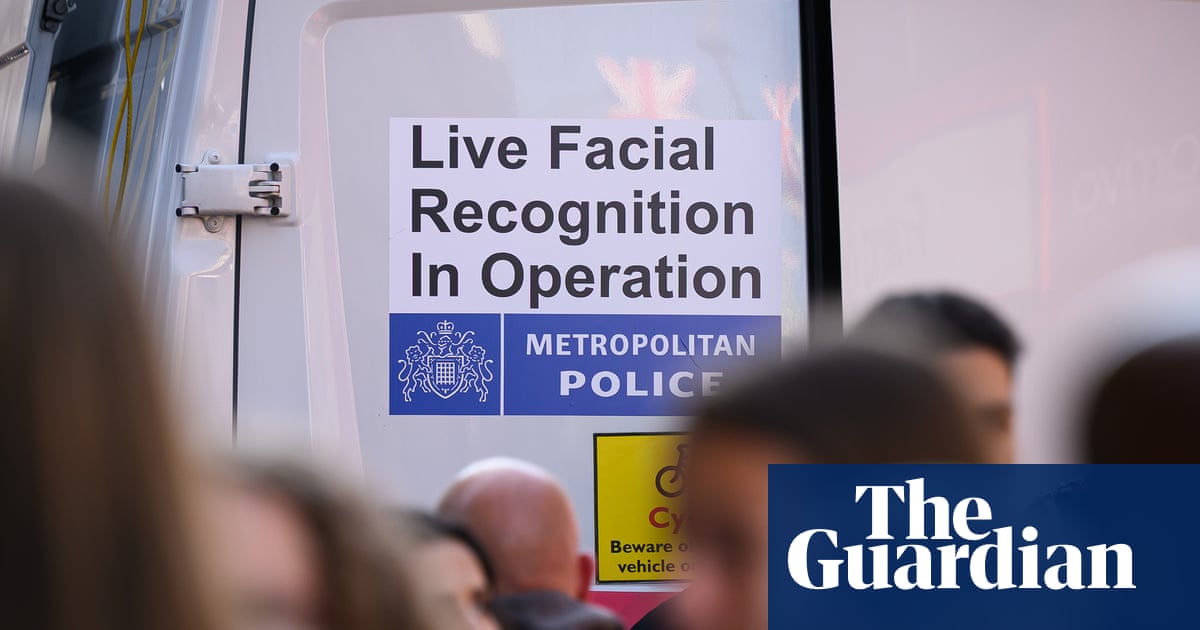

National Crime Agency, and the national lead for AI, said: “Once you’ve recognised and minimised [bias], how do you train officers to deal with outputs to ensure that it is further minimised?“If you talk about live facial recognition or predictive policing, there will be bias, and you need to get in the data scientists and the data engineers to clean the data, to train the model appropriately, and then to test it.“There is no point releasing something to policing that has bias in it that’s not recognised, and everything should be done to minimise it to a level where it can be understood and mitigated.”Examples of bias have already surfaced in the police use of retrospective facial recognition, which is powered by AI. That is where a suspect is compared with a database of images after a crime.

Live facial recognition, which is more controversial and used less often by police, hunts for suspects in real time, and also contains bias. A report in December found that a retrospective facial recognition system used by police had been used with inadequate safeguards.

The Association of Police and Crime Commissioners (APCC), which oversees local forces in England and Wales, said: “System failures have been known for some time, yet these were not shared with those communities affected, nor with leading sector stakeholders.”

The APCC forensic science lead, Darryl Preston, who is the police and crime commissioner for Cambridgeshire, said: “The discovery of an in-built bias in the police national database’s retrospective facial recognition system, even if only in limited circumstances, demonstrates the need for independent oversight of these powerful tools.

“It is not acceptable for technology to be used unless and until it has been thoroughly tested to eliminate bias. That clearly was not the case in this instance.”

The new £115m national AI centre would aim to reduce bias, Murray said, as well as assess and decide which products from private suppliers work. Currently each of the forces across the UK makes its own decisions, which is seen as slow and wasteful.

Murray said police were in an “arms race” with criminals who were using the technology: “Anyone with imagination can use AI.”

In one case, a paedophile claimed that images showing him involved in the abuse of children were deepfakes, a claim police then had to disprove to get him convicted.

Murray said the benefits of AI went far beyond the “cliche around Minority Report and predictive policing”.

He added that across a range of crimes and challenges facing policing, AI ranged from being a help to being a gamechanger, but that a human police officer would have to make the final decision about how to act on the results AI produces.

He said it could help police deal with political agitators who infect social media with fake images to try to trigger violence on the streets.

In time, Murray said, it could help with manhunts, speed up searches for cars linked to suspects, save the hundreds of hours detectives spend trawling through extensive CCTV footage, and speed up searches of digital devices seized from suspects for incriminating evidence.

“What took days, weeks, sometimes months can potentially take hours,” he said.

In one recent case, four Luton-based suspects were arrested over attacks on, and thefts from, cashpoints. Police downloaded the data from the suspects’ phones and, thanks to AI, secured guilty pleas within weeks.

The data was in Romanian; AI scoured through it, translated it, identified the material relating to potential crimes, identified the offences and presented it all in a package for detectives.

Trevor Rodenhurst, chief constable of the Bedfordshire force, told the Guardian: “This allowed us to draw evidence from lots of devices with a vast quantity of data, which we would otherwise not have been able to do.”

Rodenhurst said that as officers use AI and see its benefits, it is changing attitudes on the frontline: “They are no longer suspicious, they are asking when they can have it. That capability is transformative.”