How Nvidia’s inference bet at GTC poses a challenge and opportunity for China

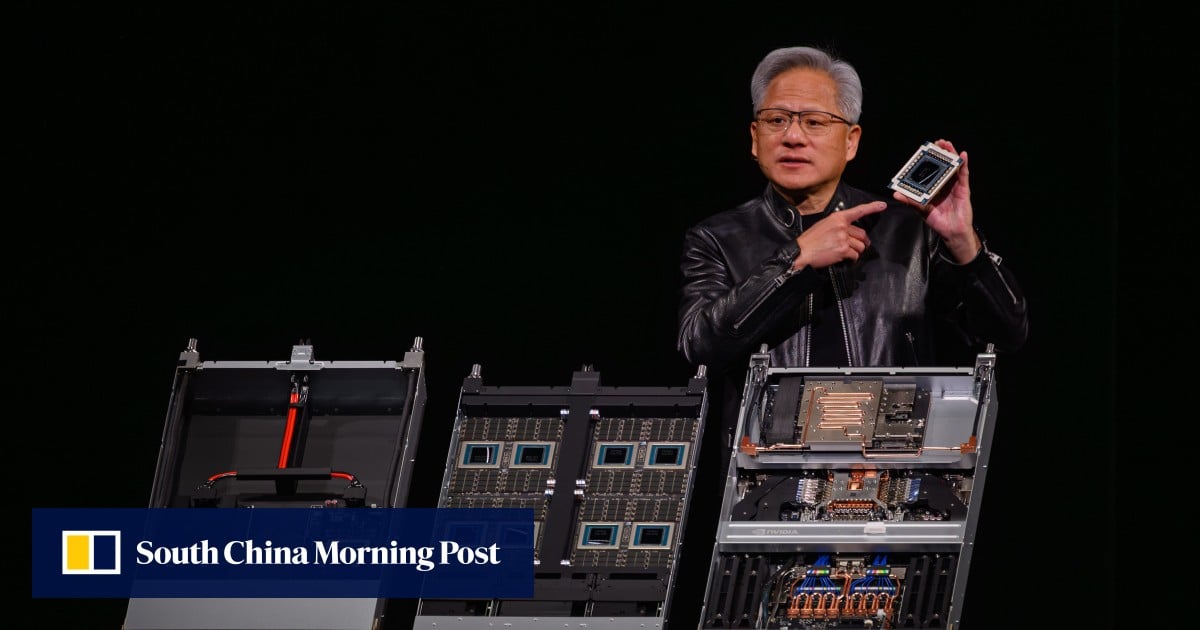

Nvidia unveiled its Groq 3 Language Processing Unit (LPU), designed for agentic AI systems, at GTC 2026 in San Jose, California. The LPU is integrated into the Vera Rubin platform, which combines CPUs, GPUs, and LPUs to create "AI factories."

Briefing Summary

Analysts suggest the launch widens the gap between Nvidia and Chinese chipmakers, shifting the contest from individual chip performance to system-level dominance. Experts note that Chinese chips lag in both hardware specifications and the standardization of AI production pipelines. However, the fragmented AI inference market gives Chinese chipmakers an opportunity to focus on specialized AI workloads outside data centers.

Article analysis

Key claims

Nvidia is moving from selling individual chips to selling “AI factories” with the Vera Rubin platform.

The LPU is designed for agentic systems, which rely on inference workloads.

Nvidia introduced the Groq 3 Language Processing Unit (LPU) at GTC 2026.

Chinese domestic chips face a lag in hardware specifications and AI production pipeline standardization.

The gap between Nvidia and its Chinese rivals is widening.