Online Safety Act

Event: UK's Online Safety Act faces scrutiny as Ofcom navigates regulation of social media, AI, and online content.

Total Coverage: 14 articles

Last 7 Days: 0

Event Overview

The UK's Online Safety Act, overseen by Ofcom, is a key piece of legislation regulating social media and online content, and several recent developments have put it in the news. Ian Cheshire has been appointed as the new chair of Ofcom and takes on the challenge of implementing the Act. Labour MPs have called on Ofcom to protect men and boys from harmful online content, specifically from 'manosphere' influencers. The Act's reach extends to AI, with potential fines or bans for AI chatbots that endanger children. Concerns have also been raised about AI generating offensive content, as seen with Grok AI posts on X that drew complaints from Liverpool and Manchester United. Ofcom is facing pressure to clarify its stance on content related to Palestine Action. Social media bans and restrictions for teenagers are being explored, with hundreds of UK teenagers set to pilot them. A suicide forum has been provisionally ruled in breach of the Act for failing to block UK users. The Act's implementation and scope remain under active debate and scrutiny.

Last updated: April 29, 2026

Coverage Timeline

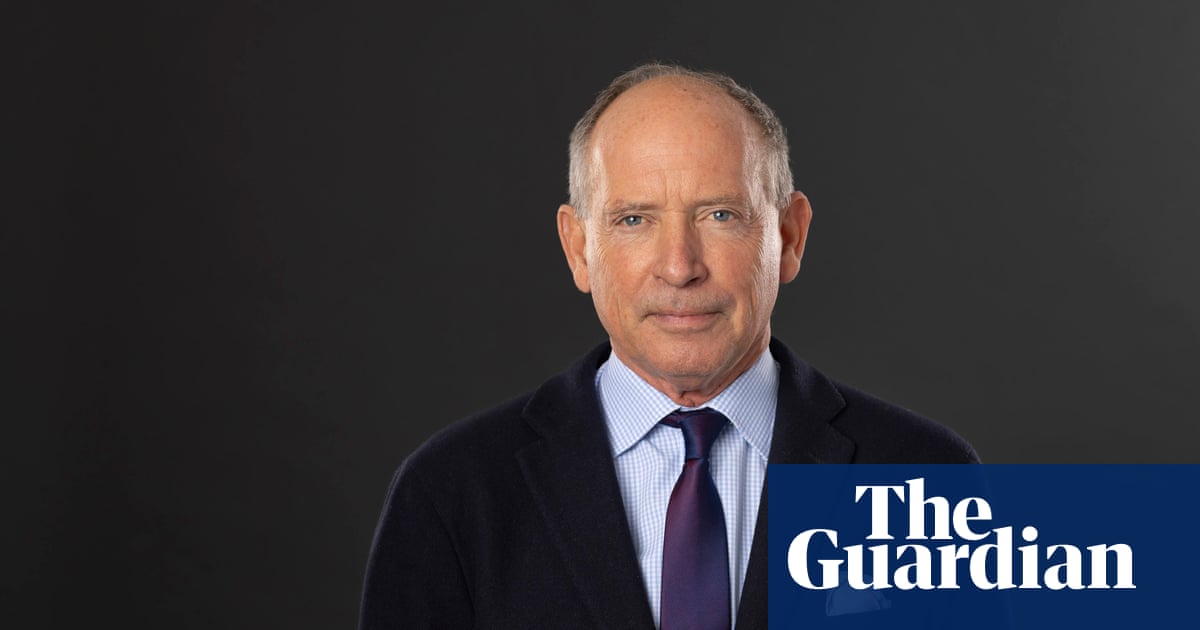

City veteran Ian Cheshire named as new chair of Ofcom

Protect men and boys from manosphere influencers, Labour MPs tell Ofcom

OpenAI delays ‘adult mode’ for ChatGPT to focus on work of higher priority

Liverpool and Manchester United complain to X over ‘sickening’ Grok AI posts

Ofcom urged to clarify if Palestine Action content should still be removed online

Hundreds of UK teenagers to pilot social media bans and restrictions

Suicide forum in breach of Online Safety Act after failing to block UK users

Starmer says government remains ‘open-minded’ about social media ban for under-16s – UK politics live